What Are AI Agents? Definition, Types, Benefits & Risks

What are AI agents? Autonomous AI systems that perceive context and act to achieve goals. Explore their architecture, types, and how they reshape automation workflows.

AI agents are artificial intelligence systems that pursue defined goals with a degree of autonomy. They perceive context (data, constraints, and signals), decide and plan as needed, and take actions iteratively toward an objective within a defined scope. In many implementations, they are powered by AI models such as large language models (LLMs).

Unlike chatbots, agents don’t just generate outputs (text, images, audio, video). They can plan, use tools (databases, business applications, and workflows), update systems, coordinate multi-step processes, and keep working toward an objective within the constraints of permissions, policies, and human checkpoints.

This matters because AI is shifting from content generation to operational action. The upside is speed, efficiency, and scale across real workflows, but the trade-off is control: once software can act autonomously, errors, security gaps, and unclear accountability quickly become costly.

Organizations that misjudge the agent shift tend to err in one of two directions: moving too slowly (missing compounding efficiency gains) or moving too recklessly (deploying autonomy without guardrails, auditability, and oversight).

This article defines AI agents; explains the operating loop and core components; covers enterprise use cases, benefits, and constraints; outlines a governance approach aligned with the NIST AI Risk Management Framework (AI RMF); and discusses implications for leadership and implementation.


What Is an AI Agent? Academic and Industry Views

At a high level, an AI agent is a software system that can perceive its environment, make decisions, and take actions to achieve a specific objective with a degree of autonomy. Unlike static programs that follow predefined steps, an AI agent adapts over time by sensing context, choosing actions, and adjusting its behavior based on outcomes.

More formally, an AI agent is a system that observes its environment via inputs, reasons about those observations, and acts on the environment via outputs to pursue goals. This framing is widely used in agent research and applied AI system design, even though definitions and boundaries can vary by author and application.

This perspective aligns with enterprise AI design principles that emphasize goal-oriented execution and tool use within governed workflows.

In practice, an AI agent is not limited to responding to a single prompt or trigger. It can maintain context over time, evaluate options, and select actions based on policies, learned behavior, and explicit objectives. Levels of sophistication vary widely, ranging from simple task agents operating under tight constraints to advanced systems that learn and adapt through experience.

For example, a customer support agent can classify and route tickets, retrieve relevant account history, propose a resolution, and escalate only when confidence is low. In operations, an agent can monitor signals (inventory, incidents, costs), recommend actions, and execute approved steps such as opening a work order or updating a dashboard.
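The support example can be sketched as a routing step that acts only when its classification is confident and escalates otherwise. This is a minimal sketch, assuming a hypothetical keyword classifier (`classify`) and an illustrative threshold (`CONFIDENCE_THRESHOLD`); neither comes from a specific product, and a production agent would more likely use a trained model for classification.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this confidence the agent escalates to a human.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Ticket:
    ticket_id: str
    text: str


def classify(ticket: Ticket) -> tuple[str, float]:
    """Stand-in classifier (assumption): keyword rules with fixed confidences.
    A real agent might call a trained model or an LLM here instead."""
    text = ticket.text.lower()
    if "refund" in text:
        return "billing", 0.92
    if "password" in text:
        return "account_access", 0.88
    return "general", 0.40


def route(ticket: Ticket) -> str:
    """Act on confident classifications; escalate uncertain ones."""
    label, confidence = classify(ticket)
    if confidence < CONFIDENCE_THRESHOLD:
        return "human_review"
    return f"queue:{label}"


print(route(Ticket("T-1", "I need a refund for my last order")))  # queue:billing
print(route(Ticket("T-2", "Something odd happened")))             # human_review
```

Keeping the escalation rule explicit in code, rather than implicit in a prompt, makes it easier to audit and tune.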

An AI agent is defined by agency: the ability to take goal-directed actions within a defined scope, often with some autonomy. This is a key difference from chatbots, assistants, copilots, and traditional automation, which typically focus on responses or predefined workflows rather than independently selecting and executing actions.


AI Agent Principles: What Makes Agents Work

These principles describe how agents operate, what capabilities they must possess, and why they are considered agents rather than passive tools or isolated components.

Taken together, these define the minimum conditions under which a system can be reasonably described as an AI agent in enterprise or technical contexts.

1. Autonomy

Autonomy refers to an AI agent’s ability to operate without continuous human intervention. Once activated, the agent can make decisions and take actions independently within predefined boundaries.

More formally, an autonomous agent controls its own action selection based on internal logic, policies, or learned behavior rather than relying on step-by-step external commands. Autonomy does not imply unrestricted freedom; it is always constrained by design parameters, permissions, and governance controls.

In practice, autonomy enables AI agents to handle ongoing tasks, respond to events in real time, and operate at machine speed, all of which are essential for scalable systems.
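One way to picture bounded autonomy is a check that every self-selected action must pass before it runs. The sketch below assumes a simple policy made of an allowlist and a per-action spending cap; `Policy`, `Action`, and `is_permitted` are illustrative names, and a real deployment would likely also enforce such limits in the surrounding platform (for example, access controls on the systems the agent calls), not only in agent code.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical operating envelope: what the agent may do, and how much."""
    allowed_actions: set[str] = field(default_factory=set)
    max_spend: float = 0.0  # per-action spending cap


@dataclass
class Action:
    name: str
    spend: float = 0.0


def is_permitted(action: Action, policy: Policy) -> bool:
    """The agent selects its own actions, but each must fit the envelope."""
    return action.name in policy.allowed_actions and action.spend <= policy.max_spend


policy = Policy(allowed_actions={"open_work_order", "update_dashboard"}, max_spend=500.0)
print(is_permitted(Action("open_work_order", spend=200.0), policy))  # True
print(is_permitted(Action("issue_refund", spend=50.0), policy))      # False: not allowed
print(is_permitted(Action("open_work_order", spend=900.0), policy))  # False: over the cap
```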

2. Goal-Directed Behavior

AI agents are designed to pursue explicit objectives. These goals may be simple, such as completing a task, or complex, such as optimizing outcomes across multiple constraints.

From an enterprise perspective, goal-directed behavior means the agent evaluates possible actions based on how effectively they advance a desired outcome. This distinguishes agents from reactive programs that respond to inputs without an overarching objective.

Clear, well-defined goals are critical in enterprise environments to ensure predictable behavior, measurable performance, and alignment with organizational intent.

3. Perception and Context Awareness

Perception is the mechanism through which an AI agent gathers information about its environment. Inputs may include user interactions, system events, data streams, or signals from other agents and services.

Context awareness extends perception by enabling the agent to interpret inputs relative to prior states, constraints, or situational factors. Rather than treating each input in isolation, the agent maintains an internal representation of the surrounding context.

This capability allows AI agents to adapt their behavior as conditions change, rather than repeatedly executing the same response.
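The difference between raw perception and context awareness can be shown with a reading that is interpreted against recent history rather than in isolation. In this minimal sketch, assuming a numeric signal such as an inventory level, the hypothetical `ContextWindow` class treats a value as anomalous when it deviates sharply from the recent average, so the same value can be normal or anomalous depending on what came before.

```python
from collections import deque


class ContextWindow:
    """Keeps recent readings so each new input is judged against them.
    The window size and the 50% tolerance are illustrative assumptions."""

    def __init__(self, size: int = 5, tolerance: float = 0.5):
        self.history = deque(maxlen=size)  # oldest readings drop off automatically
        self.tolerance = tolerance

    def interpret(self, value: float) -> str:
        """Label a reading relative to the average of recent readings."""
        if not self.history:
            label = "no_baseline"
        else:
            baseline = sum(self.history) / len(self.history)
            if baseline == 0:
                label = "normal" if value == 0 else "anomalous"
            else:
                deviation = abs(value - baseline) / abs(baseline)
                label = "anomalous" if deviation > self.tolerance else "normal"
        self.history.append(value)
        return label


ctx = ContextWindow(size=3)
print([ctx.interpret(v) for v in [100, 104, 98, 30]])
# ['no_baseline', 'normal', 'normal', 'anomalous']
```

A stock level of 30 means little on its own; against a recent average near 100, it signals a sharp drop worth acting on.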

4. Reasoning and Decision-Making

Reasoning is the process by which an AI agent evaluates information and determines what action to take next. This may involve rule-based logic, probabilistic reasoning, optimization techniques, or model-driven inference.

Decision-making connects reasoning to execution. The agent selects among alternatives based on criteria such as expected outcomes, costs, risks, or priorities defined by its objectives.

In enterprise systems, constrained and explainable decision-making is especially important for trust, auditability, and policy compliance.

5. Learning and Adaptation

Some AI agents can improve their behavior over time through learning. Learning enables the agent to adjust its strategies based on feedback, observed outcomes, or new data.

More formally, learning allows an agent to modify internal parameters or decision policies to improve performance with respect to its objectives. Not all AI agents learn, but the ability to adapt is a defining feature of more advanced systems.

When applied carefully, learning helps agents manage variability and uncertainty, but it also introduces additional requirements for validation, monitoring, and governance.

Together, these principles provide a practical framework for understanding what makes an AI agent functionally distinct. They also offer a lens for evaluating whether a given system truly qualifies as an agent or is better described using another classification.

AI Agents vs Agentic AI vs Chatbots vs Assistants

While AI agents may incorporate elements of automation, conversational interfaces, or machine learning models, they are defined by a broader combination of autonomy, decision-making, and action.

AI Agent vs Agentic AI

Agentic AI is a broad term for systems designed to behave “agent-like.” It describes an approach in which AI can pursue goals with some autonomy, deciding what to do next and taking actions.

An AI agent is the concrete system that implements that approach. It operates with a defined goal, uses context and constraints, and runs an execution loop to plan and act in real workflows.

The key distinction is that agentic AI describes the capability or design pattern, while an AI agent is the deployed implementation that executes tasks. In other words, agentic AI is the “how,” while an AI agent is the “what” you run.

AI Agent vs Chatbots

Chatbots are primarily designed for conversational interaction. Most respond to user inputs using predefined flows or model-generated text, often within a single interaction session.

AI agents may include conversational interfaces, but conversation is not their defining feature. An agent can act without direct user interaction, initiate tasks, call tools, or coordinate with other systems to achieve objectives.

The key distinction is that chatbots are primarily designed for communication, while AI agents are designed to take goal-directed actions. Conversation is a possible capability, not the core function.

AI Agent vs AI Assistant

AI assistants are primarily designed to help users through interaction. Most respond to prompts by generating answers, summaries, or recommendations, and typically wait for the user to direct the next step.

AI agents may include an assistant-style interface, but assistance is not their defining feature. An agent can plan and execute tasks end-to-end, take actions across tools and systems, and continue working toward an objective with limited supervision.

The key distinction is that AI assistants are optimized for helpful responses and guidance, while AI agents are optimized for goal completion through action. An assistant supports the work, while an agent can do the work.

AI Agent vs Copilot

A copilot is primarily designed to assist a user while they work. Most copilots provide suggestions, drafts, or next-step recommendations inside a specific application, and the user remains the primary decision-maker and executor.

An AI agent may include a copilot-style interface, but assistance is not its defining feature. An agent can plan and execute tasks end-to-end, take actions across tools and systems, and operate with more autonomy once goals and constraints are set.

The key distinction is that a copilot keeps a human “in the loop” as the driver, while an AI agent can run workflows as the operator. A copilot accelerates your work; an agent can complete work on your behalf.

AI Agent vs Traditional Automation

Traditional automation typically executes predefined rules or workflows in response to specific triggers. Its behavior is deterministic and remains unchanged unless the underlying logic is manually updated.

An AI agent, by contrast, evaluates multiple possible actions and selects among them based on goals and context. Rather than following a fixed script, it operates within a decision space, allowing it to handle variability and exceptions more effectively.

In practice, automation is best suited to stable, repetitive tasks, while AI agents are better suited to dynamic environments where conditions change and judgment is required.

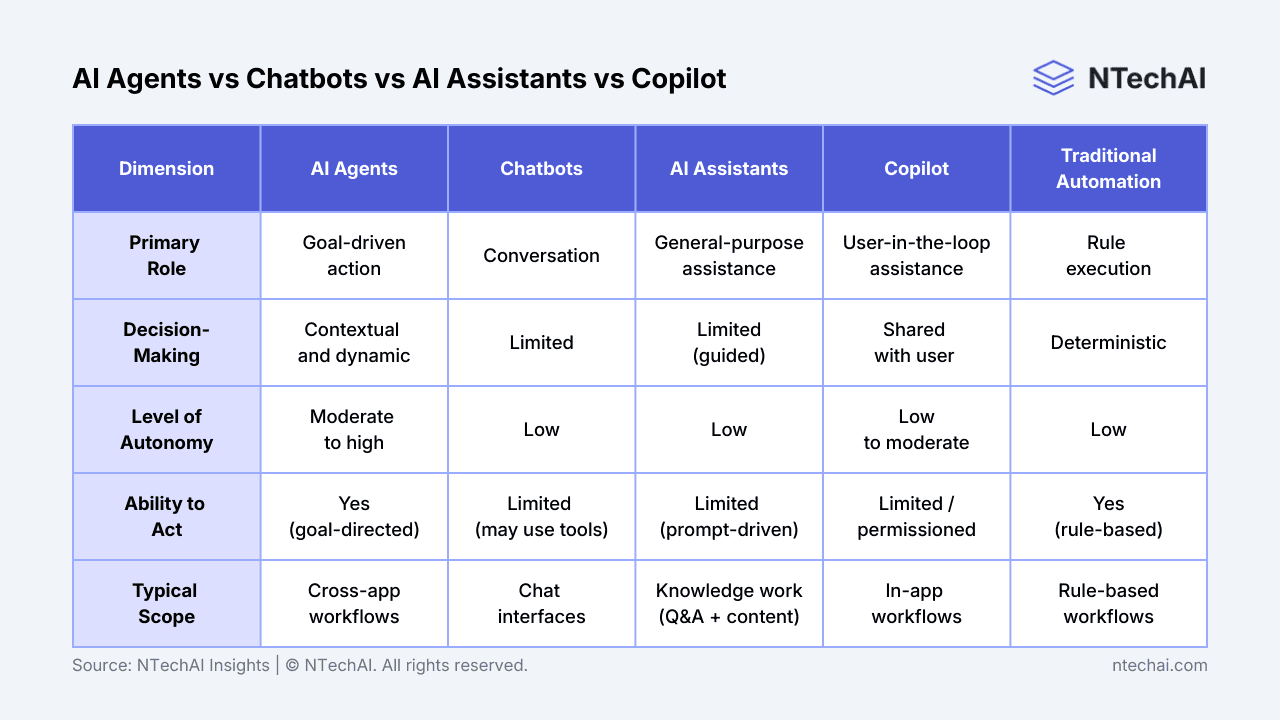

Comparison of common AI system categories across primary role, decision-making, autonomy, ability to act, and typical scope. Note: categories are conceptual; real implementations vary by design, permissions, and deployment context.

Taken together, these comparisons position AI agents as a distinct architectural pattern rather than a single technology. They sit at the intersection of intelligence, decision-making, and action, which explains both their potential and their complexity.

How AI Agents Work: Perceive-Plan-Act (with Feedback)

Though implementations vary, many AI agents follow a common conceptual loop that connects perception, reasoning, action, and feedback.


Many enterprise systems follow established agentic architecture patterns, which structure AI agents around perception, planning, tool use, and feedback loops to coordinate complex tasks across systems.

1. Perception: Receiving Inputs

Perception is the stage where an AI agent gathers information about its environment. Inputs may come from user interactions, system events, databases, sensors, or application programming interfaces (APIs).

At this stage, the agent does not yet decide what to do. Its focus is on collecting, filtering, and normalizing relevant signals to ensure consistent interpretation.

Because all downstream decisions depend on these inputs, the quality and relevance of perception directly influence the agent’s overall performance.
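The collect, filter, and normalize step might look like the following minimal sketch, which maps payloads from different sources onto one `Observation` schema and drops signals the agent cannot use. The source names and payload fields (`inventory_api`, `qty`, `monitoring`, `latency_ms`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    source: str
    kind: str
    value: float


def normalize(raw: dict) -> Optional[Observation]:
    """Map source-specific payloads to one schema; drop what the agent cannot use."""
    if raw.get("source") == "inventory_api":
        # The inventory feed sends quantities as strings; coerce to a number.
        return Observation("inventory_api", "stock_level", float(raw["qty"]))
    if raw.get("source") == "monitoring":
        # Monitoring reports latency in milliseconds; convert to seconds.
        return Observation("monitoring", "latency_s", raw["latency_ms"] / 1000)
    return None  # unknown or irrelevant signal: filtered out


raw_inputs = [
    {"source": "inventory_api", "qty": "42"},
    {"source": "monitoring", "latency_ms": 250},
    {"source": "marketing_feed", "headline": "New campaign launched"},
]
observations = [obs for raw in raw_inputs if (obs := normalize(raw)) is not None]
print(observations)
```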

2. Planning: Reasoning and Sequencing Actions

During planning, the AI agent interprets its inputs and evaluates possible courses of action. This can include applying rules, weighing probabilities, querying knowledge sources, or invoking AI models to generate options.

It then sequences the next actions toward the goal while accounting for constraints such as time, cost, risk, and policy limits. In more advanced implementations, the agent may simulate likely outcomes before selecting a plan.

Because planning determines what the agent will do next, this is where intelligence is most visible and where governance matters most. Guardrails, approvals, and policy controls keep the agent’s decisions aligned with safety, compliance, and intent.

3. Action: Executing Decisions

Action is the stage where the AI agent interacts with its environment. This may include sending messages, updating records, triggering workflows, calling external services, or delegating tasks to other agents.

Unlike passive systems, an AI agent’s actions can change the environment’s state. These changes then influence future perceptions, forming a continuous operating loop.

For this reason, actions are typically constrained by permissions and closely monitored for compliance and safety.
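Because actions change state, a common pattern is to route every action through one wrapper that records what was done, with which inputs, and with what result. This is a minimal sketch: `execute` and `audit_log` are hypothetical names, the in-memory list stands in for durable log storage, and `close_work_order` stands in for a real integration.

```python
import time
from typing import Any, Callable

audit_log: list[dict[str, Any]] = []  # in-memory stand-in for durable audit storage


def execute(action_name: str, handler: Callable[..., Any], **params: Any) -> Any:
    """Run one action and append an audit entry whether it succeeds or fails."""
    entry: dict[str, Any] = {"ts": time.time(), "action": action_name, "params": params}
    try:
        result = handler(**params)
        entry.update(status="ok", result=result)
        return result
    except Exception as exc:
        entry.update(status="error", error=repr(exc))
        raise  # surface the failure to the caller after recording it
    finally:
        audit_log.append(entry)


# Usage: a toy "system of record" whose state the action changes.
records = {"WO-1": "open"}


def close_work_order(order_id: str) -> str:
    records[order_id] = "closed"
    return records[order_id]


execute("close_work_order", close_work_order, order_id="WO-1")
print(records["WO-1"], audit_log[-1]["status"])  # closed ok
```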

4. Feedback Loop: Learning from Outcomes

The feedback loop connects outcomes back into the agent’s internal state. The agent observes the results of its actions and uses that information to inform future behavior.

Feedback may be explicit, such as performance metrics or human evaluations, or implicit, such as changes in system state. In learning-enabled agents, this feedback can drive adaptation over time.

While feedback enables improvement, it also introduces complexity related to validation, model drift, and control.

Together, these stages form a continuous cycle rather than a linear process. The agent is always observing, deciding, acting, and reassessing, enabling sustained, goal-directed behavior in dynamic environments.
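The four stages can be tied together in a short loop. This is a minimal sketch under simplifying assumptions: the environment is a single number, `run_loop` and the toy thermostat functions are hypothetical, and feedback is reduced to one check on whether the last action made progress. The step limit acts as a simple guardrail so the loop cannot run indefinitely.

```python
from typing import Callable, Optional


def run_loop(
    perceive: Callable[[], float],
    plan: Callable[[float], Optional[str]],
    act: Callable[[str], None],
    goal: float,
    max_steps: int = 20,
) -> str:
    """Perceive, plan, act, and check feedback until the goal is reached.
    Feedback rule (an assumption): if an action does not bring the state
    closer to the goal, stop and escalate instead of retrying blindly."""
    for _ in range(max_steps):
        observed = perceive()            # 1. perception
        action = plan(observed)          # 2. planning
        if action is None:               # the planner judges the goal met
            return "goal_reached"
        act(action)                      # 3. action changes the environment
        outcome = perceive()             # 4. feedback: observe the result
        if abs(outcome - goal) >= abs(observed - goal):
            return "escalate_no_progress"
    return "escalate_step_limit"


# Usage: a toy environment, a room that should reach 22 degrees.
GOAL = 22.0
room = {"temp": 25.0}


def read_temp() -> float:
    return room["temp"]


def choose(temp: float) -> Optional[str]:
    if abs(temp - GOAL) < 0.5:
        return None
    return "cool" if temp > GOAL else "heat"


def adjust(action: str) -> None:
    room["temp"] += -1.0 if action == "cool" else 1.0


print(run_loop(read_temp, choose, adjust, goal=GOAL))  # goal_reached
```

If the actuator silently fails (for example, `act` does nothing), the feedback check notices the lack of progress on the first step and escalates rather than looping.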

AI Agent Types: Reactive to Multi-Agent Systems

This section outlines the primary ways AI agents are commonly classified based on how they make decisions and adapt to their environment. These categories help frame design choices, capability trade-offs, and suitability for different enterprise use cases.

The classifications are conceptual rather than rigid. In practice, many real-world AI systems combine characteristics from multiple agent types.

Reactive AI Agents

Reactive agents respond directly to current inputs without maintaining an internal model of the environment. Their behavior is driven by condition–action rules that map observed states to predefined responses.

Because they do not reason about history or future outcomes, reactive agents are relatively simple and fast. They are well-suited to environments where conditions are predictable and decisions must be made with minimal latency.

However, the absence of memory and planning limits their ability to handle complex or evolving situations.
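A reactive agent reduces to an ordered list of condition–action rules evaluated against the current input only. The sketch below assumes a hypothetical pump-monitoring scenario; the percept fields and thresholds are illustrative, and rule order matters because the first match wins.

```python
from typing import Callable

# Each rule pairs a condition on the current percept with a fixed response.
# The percept fields and thresholds are illustrative assumptions.
RULES: list[tuple[Callable[[dict], bool], str]] = [
    (lambda p: p["temperature_c"] > 80, "shut_down_pump"),
    (lambda p: p["pressure_bar"] > 6, "open_relief_valve"),
]


def reactive_agent(percept: dict) -> str:
    """Return the action of the first matching rule. No memory, no planning."""
    for condition, action in RULES:
        if condition(percept):
            return action
    return "no_op"


print(reactive_agent({"temperature_c": 85, "pressure_bar": 3}))  # shut_down_pump
print(reactive_agent({"temperature_c": 60, "pressure_bar": 7}))  # open_relief_valve
print(reactive_agent({"temperature_c": 60, "pressure_bar": 3}))  # no_op
```

Because `reactive_agent` keeps no state, the same percept always produces the same action. That makes it fast and predictable, but it cannot notice, for example, a slow upward trend in temperature.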

Goal-Based AI Agents

Goal-based agents select actions based on whether those actions move them closer to a defined objective. Rather than reacting immediately, they evaluate alternatives in light of desired outcomes.

This approach allows agents to handle tasks that require sequencing, prioritization, or adjustment when circumstances change. As long as the goal remains stable, the agent can adapt its behavior accordingly.

Goal-based agents are common in enterprise systems that must balance competing constraints while pursuing measurable outcomes.
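Goal-based behavior can be illustrated as a search for a sequence of actions that reaches a goal state. This minimal sketch uses breadth-first search, one of several possible planning methods, over a hypothetical order-fulfillment state graph; `TRANSITIONS` and `plan_to_goal` are illustrative names.

```python
from collections import deque
from typing import Optional

# Hypothetical state graph: state -> {action: resulting state}.
TRANSITIONS = {
    "order_received": {"validate": "validated", "hold": "on_hold"},
    "on_hold": {"release": "order_received"},
    "validated": {"pick_items": "picked", "cancel": "cancelled"},
    "picked": {"ship": "shipped"},
}


def plan_to_goal(start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search for the shortest action sequence from start to goal."""
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, actions = frontier.popleft()
        if state == goal:
            return actions
        for action, next_state in TRANSITIONS.get(state, {}).items():
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, actions + [action]))
    return None  # goal unreachable from this state


print(plan_to_goal("order_received", "shipped"))  # ['validate', 'pick_items', 'ship']
```

If circumstances change, for instance an order is put on hold, the agent can re-plan from the new state toward the same goal: `plan_to_goal("on_hold", "shipped")` returns `['release', 'validate', 'pick_items', 'ship']`.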

Utility-Based AI Agents

Utility-based agents extend goal-based reasoning by assigning values to different outcomes. Instead of simply achieving a goal, the agent seeks to maximize overall utility based on preferences, costs, risks, or performance metrics.

This enables more nuanced decision-making when trade-offs are unavoidable. For example, an agent may select a slightly slower option if it reduces risk or resource consumption.

Utility-based agents are often used in optimization scenarios where multiple, sometimes conflicting, factors must be considered simultaneously.
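The slower-but-safer trade-off can be made concrete with a weighted utility function. In this minimal sketch, the weights, options, and figures are hypothetical; they encode how much the organization is assumed to care about time, risk, and cost. Each factor is treated as a penalty, so the option with the highest (least negative) utility wins.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    hours: float  # time to complete
    risk: float   # estimated probability of failure, 0..1
    cost: float   # monetary cost


# Illustrative weights expressing assumed organizational preferences.
WEIGHTS = {"hours": 1.0, "risk": 50.0, "cost": 0.01}


def utility(opt: Option) -> float:
    """Higher is better: each factor is a penalty scaled by its weight."""
    return -(WEIGHTS["hours"] * opt.hours
             + WEIGHTS["risk"] * opt.risk
             + WEIGHTS["cost"] * opt.cost)


options = [
    Option("express_route", hours=4, risk=0.20, cost=300),
    Option("standard_route", hours=6, risk=0.02, cost=250),
]
best = max(options, key=utility)
print(best.name)  # standard_route
```

The express route is faster, but under these weights its higher risk outweighs the saved hours (utility of roughly -17 versus -9.5). Changing the weights changes the decision, which is why they deserve the same review as any other policy.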

Learning AI Agents

Learning agents improve their performance over time by adjusting their behavior based on experience. They use feedback from prior actions to refine internal models or decision policies.

More formally, learning allows an agent to reduce uncertainty and adapt to changes in its environment. Techniques may include reinforcement learning, which adjusts behavior based on rewards, or supervised updates from labeled examples.

While learning increases flexibility, it also introduces challenges related to predictability, validation, and governance.
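Reinforcement learning takes many forms; one of the simplest is the epsilon-greedy multi-armed bandit, swapped in here purely as an illustration. The agent usually repeats the action with the best average reward so far, occasionally explores an alternative, and updates its estimates from each observed outcome. The class name, the two hypothetical message templates, and their success rates are assumptions for a simulated environment.

```python
import random


class EpsilonGreedyAgent:
    """Epsilon-greedy bandit: explore at random with probability epsilon,
    otherwise exploit the action with the highest average reward so far."""

    def __init__(self, actions: list[str], epsilon: float = 0.1, seed: int = 0):
        self.actions = actions
        self.epsilon = epsilon
        self.counts = {a: 0 for a in actions}
        self.values = {a: 0.0 for a in actions}  # running mean reward per action
        self.rng = random.Random(seed)

    def choose(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)                 # explore
        return max(self.actions, key=lambda a: self.values[a])   # exploit

    def update(self, action: str, reward: float) -> None:
        """Incremental mean: new = old + (reward - old) / n."""
        self.counts[action] += 1
        n = self.counts[action]
        self.values[action] += (reward - self.values[action]) / n


# Simulated environment (assumption): template_b succeeds more often.
env_rng = random.Random(42)
success_rate = {"template_a": 0.3, "template_b": 0.7}
agent = EpsilonGreedyAgent(list(success_rate))
for _ in range(2000):
    action = agent.choose()
    reward = 1.0 if env_rng.random() < success_rate[action] else 0.0
    agent.update(action, reward)
print(agent.values, max(agent.values, key=agent.values.get))
```

The `epsilon` setting illustrates the governance questions raised above: exploration means the agent will sometimes deliberately try an option it currently believes is worse, which may be unacceptable in high-stakes workflows.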

Multi-Agent AI Systems

Multi-agent systems consist of multiple AI agents that interact with one another, either cooperatively or competitively. Each agent operates independently, but collective behavior emerges from their interactions.

These systems are useful for modeling and managing complex environments such as markets, supply chains, or distributed infrastructure. Coordination, communication, and conflict resolution become central design concerns.

While powerful, multi-agent systems significantly increase architectural and governance complexity.
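A multi-agent system needs some way to decide which agent handles which task. This minimal sketch assumes a simple coordinator that routes tasks by declared skill and lets agents delegate follow-up tasks to one another; the `Agent` and `Coordinator` classes, the `skill:detail` task format, and the supply-chain agents are hypothetical. The `max_tasks` cap is a basic guard against agents delegating to each other in an endless cycle.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    name: str
    skills: set[str]
    handle: Callable[[str], list[str]]  # returns follow-up tasks, if any


@dataclass
class Coordinator:
    """Routes each task to the first agent that declares the needed skill."""
    agents: list[Agent]
    log: list[str] = field(default_factory=list)

    def run(self, tasks: list[str], max_tasks: int = 50) -> list[str]:
        queue = list(tasks)
        while queue and len(self.log) < max_tasks:
            task = queue.pop(0)
            skill = task.split(":", 1)[0]
            agent = next((a for a in self.agents if skill in a.skills), None)
            if agent is None:
                self.log.append(f"unassigned -> {task}")
                continue
            self.log.append(f"{agent.name} -> {task}")
            queue.extend(agent.handle(task))  # delegation: new tasks for other agents
        return self.log


monitor = Agent("monitor", {"monitor"}, lambda t: ["optimize:reorder SKU-7"])
optimizer = Agent("optimizer", {"optimize"}, lambda t: ["execute:place PO for SKU-7"])
executor = Agent("executor", {"execute"}, lambda t: [])

log = Coordinator([monitor, optimizer, executor]).run(["monitor:check stock"])
for line in log:
    print(line)
# monitor -> monitor:check stock
# optimizer -> optimize:reorder SKU-7
# executor -> execute:place PO for SKU-7
```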

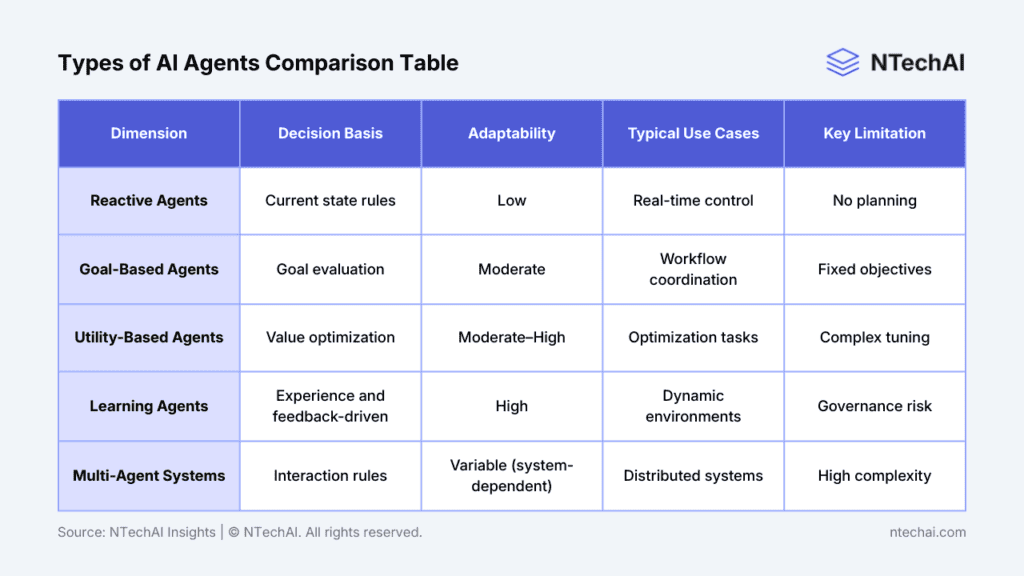

Conceptual comparison of common AI agent types based on decision-making approach, adaptability, typical use cases, and key limitations. Categories are illustrative rather than exhaustive.

These categories provide a structured way to think about AI agent capabilities and limitations. Selecting the right type, or combination of types, depends on the problem domain, operational risk, and level of autonomy required.

Benefits of AI Agents: Efficiency, Scale, and Speed

The benefits outlined here assume disciplined design, clearly defined objectives, and appropriate levels of oversight.

Operational Efficiency and Scale

AI agents can operate continuously and handle high volumes of tasks without proportional increases in human effort. This helps organizations scale operations while maintaining consistent performance and service levels.

By automating decision-driven workflows across systems, agents reduce manual handoffs, delays, and coordination overhead. In well-scoped processes, this can improve throughput and resource utilization, though evidence on GenAI productivity impacts shows results depend on task fit, adoption, and governance.

Efficiency gains are most pronounced in environments with repeatable decision patterns, reliable inputs, and clearly defined constraints, where actions can be executed and validated with minimal ambiguity.

Faster and More Consistent Decision-Making

AI agents evaluate information and execute decisions at machine speed, allowing them to respond to events in real time or near real time. This level of responsiveness is difficult to achieve through human-only processes.

Consistency is an equally important advantage. Agents apply the same logic and criteria across decisions, reducing variability caused by fatigue, distraction, or subjective judgment.

In regulated or high-volume environments, this consistency supports compliance and operational stability.

Continuous Availability

Unlike human operators, AI agents do not require rest, shifts, or coverage planning. They can operate continuously, maintaining service levels across time zones and demand fluctuations.

Continuous availability is particularly valuable for monitoring, incident response, and customer-facing systems that must remain responsive at all times.

This capability extends organizational capacity without eliminating the need for human expertise.

Personalization at Scale

AI agents can tailor their actions or responses to individual context, preferences, or historical data. This allows systems to deliver more relevant outcomes without manual customization.

Because personalization is managed algorithmically, agents can support large populations without linear increases in effort or cost.

Effective personalization depends on high-quality data and clearly defined behavioral boundaries.

Reduced Cognitive Load for Humans

By handling routine decisions and coordination tasks, AI agents free human workers to focus on higher-value activities. This can reduce cognitive overload and improve attention on complex or strategic work.

Rather than replacing human judgment, well-designed agents act as force multipliers by surfacing options, executing follow-ups, and managing background processes. This shift can improve both productivity and decision quality when roles and responsibilities are clearly defined.

These benefits explain why AI agents are increasingly adopted across industries. However, they are not automatic outcomes and depend on thoughtful implementation and alignment with organizational goals.

AI Agent Risks: Security, Reliability, and Control

While agents can deliver significant benefits, they also introduce new technical, operational, and organizational challenges that must be addressed deliberately.

Understanding these risks is essential for setting realistic expectations and designing effective controls.

Loss of Human Oversight

As AI agents become more autonomous, human supervision risks becoming too distant or infrequent to be effective. Decisions and actions may occur faster than humans can reasonably review in real time.

Without clear escalation paths and review mechanisms, errors can propagate undetected. This is especially concerning in environments where decisions carry financial, legal, or safety implications.

Maintaining meaningful oversight requires intentional design, not just the ability to intervene after the fact.

Bias, Ethics, and Accountability

AI agents may inherit biases from training data, decision rules, or feedback signals. When agents operate autonomously, these biases can be amplified across many decisions.

Accountability can also become unclear. Responsibility is difficult to assign when outcomes emerge from interactions among models, data, and system logic.

Addressing these concerns requires transparency, regular evaluation, and clearly defined ownership.

Security and Misuse Risks

AI agents often have access to systems, data, or tools that make them attractive targets for misuse. If compromised, an agent may be able to take harmful actions at scale.

Misuse can also occur unintentionally when agents are granted overly broad permissions or poorly defined objectives. In such cases, behavior may be technically correct but operationally undesirable.

Strong access controls, monitoring, and permission boundaries are essential safeguards.

Reliability and Hallucinations

When AI agents rely on probabilistic models such as LLMs, they may generate plausible but incorrect outputs, often called hallucinations. If these outputs drive actions, the consequences can extend beyond misinformation.

Reliability issues are particularly problematic when agents operate with limited supervision. Errors may go unnoticed until they cause a downstream impact.

Mitigation requires validation layers, fallback mechanisms, and conservative use of model-generated outputs in high-stakes contexts.
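A validation layer sits between a model's proposed action and its execution. This is a minimal sketch, assuming the model is asked to return its proposal as JSON; the `ALLOWED` schema and `validate_proposal` function are hypothetical. Anything that fails to parse, names an unknown action, or carries unexpected parameters falls back to human review rather than being executed.

```python
import json

# Hypothetical schema: allowed actions and the parameter types each requires.
ALLOWED = {
    "update_record": {"record_id": str, "status": str},
    "send_email": {"to": str, "subject": str},
}


def validate_proposal(model_output: str) -> dict:
    """Accept a model-proposed action only if it parses and matches the schema;
    otherwise fall back to a safe default (route to a human)."""
    fallback = {"action": "human_review", "reason": ""}
    try:
        proposal = json.loads(model_output)
    except json.JSONDecodeError:
        return {**fallback, "reason": "unparseable output"}
    if not isinstance(proposal, dict):
        return {**fallback, "reason": "not an object"}
    action = proposal.get("action")
    if not isinstance(action, str) or action not in ALLOWED:
        return {**fallback, "reason": "unknown action"}
    params = proposal.get("params")
    schema = ALLOWED[action]
    if not isinstance(params, dict) or set(params) != set(schema):
        return {**fallback, "reason": "unexpected parameters"}
    for key, expected_type in schema.items():
        if not isinstance(params[key], expected_type):
            return {**fallback, "reason": f"bad type for {key}"}
    return {"action": action, "params": params}


print(validate_proposal('{"action": "update_record", "params": {"record_id": "R1", "status": "closed"}}'))
# {'action': 'update_record', 'params': {'record_id': 'R1', 'status': 'closed'}}
print(validate_proposal("Sure, I will close the ticket now."))
# {'action': 'human_review', 'reason': 'unparseable output'}
```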

Integration and Governance Complexity

Deploying AI agents typically involves integrating multiple systems, data sources, and decision components. This increases architectural complexity and can strain existing governance frameworks.

Organizations may struggle to define policies for monitoring, auditing, and updating agents as they evolve. Without clear standards, operational overhead can increase rapidly. Effective governance must scale alongside agent capability.

These risks do not negate the value of AI agents, but they highlight the need for disciplined design and governance. Successful use depends as much on organizational readiness as on technical capability.

AI Agent Examples: Real-World Use Cases

This section illustrates how AI agents are applied in real-world contexts to perform meaningful work. Concrete examples help translate abstract concepts into practical patterns and clarify what distinguishes AI agents from simpler tools or automation.

The focus is on functional use cases rather than specific products or vendors.

Customer Support and Virtual Assistants

In customer support environments, AI agents can manage end-to-end interactions, including request triage, information retrieval, and follow-up actions. Unlike basic chat interfaces, these agents operate across multiple systems rather than within a single conversation window.

For example, an agent may identify an issue, check account status, open a service ticket, and notify the appropriate team without human intervention. Human staff can step in when cases exceed defined complexity or risk thresholds.

This approach improves response times while preserving escalation paths for nuanced or sensitive issues.

Autonomous Research and Analysis Agents

Research-oriented AI agents gather information from multiple sources, synthesize findings, and produce structured outputs such as summaries or reports. They can operate continuously, updating results as new data becomes available.

These agents are commonly used to monitor trends, scan large document collections, or analyze datasets that would be impractical to review manually. The agent determines what to collect, how to process it, and when to surface changes.

Human review remains essential for validating conclusions and interpreting implications.

Workflow and Business Process Automation

AI agents are increasingly used to coordinate complex workflows involving conditional logic and multiple systems. Unlike static automation, agents can adapt workflows based on context and intermediate outcomes.

For instance, an agent may manage an approval process by assessing risk, routing tasks to appropriate reviewers, and automatically following up. If conditions change, the agent can revise its approach without restarting the workflow.

This adaptability makes agents well-suited for dynamic operational environments.

Personal Productivity and Planning Agents

In productivity scenarios, AI agents assist with planning, prioritization, and follow-through. They can monitor calendars, track tasks, and suggest actions based on deadlines, dependencies, and changing conditions.

Rather than acting as passive reminders, these agents proactively manage workflows by rescheduling tasks, surfacing conflicts, or initiating preparatory steps when criteria are met.

The value lies in coordination and continuity, not in information delivery alone.

Enterprise Multi-Agent Systems

In large organizations, multiple AI agents may operate together as a coordinated system. Each agent performs a specific role, such as monitoring, optimization, or execution, while communicating with others as needed.

Examples include supply chain coordination, infrastructure management, or financial operations. Collective behavior emerges from defined interaction rules, often alongside orchestration.

These systems can deliver significant efficiency gains but require robust orchestration and governance.

AI Agent Best Practices: Design and Development

This section outlines practical guidelines for deploying AI agents responsibly and effectively. These best practices help organizations capture value while managing risk, complexity, and long-term maintainability.

The emphasis is on design discipline and operational readiness rather than specific tools or technologies.

Define Clear Objectives and Boundaries

Effective AI agents are built around explicit goals and well-defined operating limits. Objectives should be specific enough to guide decision-making and measurable enough to assess performance.

Boundaries are equally important. These include what the agent is allowed to do, which systems it can access, and when it must defer to human judgment.

Clear objectives and constraints reduce unintended behavior and make agent actions easier to audit and explain.

Keep Humans in the Loop

Human oversight remains critical, particularly for decisions with legal, financial, or ethical implications. AI agents should include defined checkpoints for human review, approval, or override of actions.

This does not require constant supervision. Instead, it relies on escalation paths, exception handling, and post-action review mechanisms.

Well-designed human-in-the-loop models balance autonomy with accountability.
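Human checkpoints can be expressed as a gate that lets low-impact actions through and holds high-impact ones for a person to approve. The sketch assumes each action arrives with an impact score between 0 and 1 from some upstream assessment; `ApprovalGate`, the threshold, and the example actions are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ApprovalGate:
    """Executes low-impact actions directly and queues high-impact ones
    for human approval. Impact scores and the threshold are assumptions."""
    threshold: float
    pending: list[tuple[str, float]] = field(default_factory=list)
    executed: list[str] = field(default_factory=list)

    def submit(self, action: str, impact: float) -> str:
        if impact >= self.threshold:
            self.pending.append((action, impact))
            return "awaiting_approval"
        self.executed.append(action)
        return "executed"

    def approve(self, action: str) -> bool:
        """A human reviewer releases a queued action; False if none is queued."""
        for i, (name, _) in enumerate(self.pending):
            if name == action:
                del self.pending[i]
                self.executed.append(name)
                return True
        return False


gate = ApprovalGate(threshold=0.7)
print(gate.submit("send_status_update", impact=0.1))  # executed
print(gate.submit("issue_refund_2500", impact=0.9))   # awaiting_approval
print(gate.approve("issue_refund_2500"))              # True
print(gate.executed)  # ['send_status_update', 'issue_refund_2500']
```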

Start Narrow, Then Scale

Organizations benefit from deploying AI agents incrementally. Starting with a narrow, well-scoped use case allows teams to validate behavior, measure impact, and refine controls.

As confidence grows, capabilities can be expanded or additional agents introduced. This staged approach reduces risk and avoids overengineering early deployments.

Scaling should follow demonstrated value rather than assumed potential.

Monitor Behavior and Performance

Continuous monitoring is essential once an AI agent is in operation. This includes tracking decision outcomes, error rates, and deviations from expected behavior.

Monitoring also supports early detection of drift, where an agent’s performance changes due to evolving data or conditions. Without visibility, issues may go unnoticed until they cause operational impact.

Effective monitoring combines automated metrics with periodic human review.
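Drift detection can start simply: compare a rolling error rate against the rate observed during validation. In this minimal sketch, `DriftMonitor`, the window size, and the tolerance are illustrative assumptions; production monitoring would more likely track several metrics and route alerts to human review rather than act on them automatically.

```python
from collections import deque


class DriftMonitor:
    """Flags drift when the recent error rate exceeds baseline + tolerance."""

    def __init__(self, baseline_error_rate: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline_error_rate
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # True = error, False = success

    def record(self, is_error: bool) -> None:
        self.outcomes.append(is_error)

    def recent_error_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def drifted(self) -> bool:
        """Only judge once the window is full, to avoid alerting on tiny samples."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.recent_error_rate() > self.baseline + self.tolerance


drift_monitor = DriftMonitor(baseline_error_rate=0.02, window=50)
for _ in range(50):
    drift_monitor.record(False)
print(drift_monitor.drifted())  # False
for i in range(50):
    drift_monitor.record(i % 5 == 0)  # 1 error in every 5 outcomes
print(drift_monitor.recent_error_rate(), drift_monitor.drifted())  # 0.2 True
```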

Design for Security and Compliance

AI agents often operate across sensitive systems and data. Security must be embedded from the outset rather than added after deployment.

This includes enforcing least-privilege access, logging actions, and ensuring compliance with applicable policies and regulations. Agents should be treated as operational actors rather than passive software components.

Strong security and compliance practices protect both the organization and the agent’s integrity.

These best practices reflect a broader principle: successful AI agents require organizational discipline as much as technical capability. Governance, clarity, and iteration are central to sustainable adoption.

Future of AI Agents: Trends and Outlook

This section examines how AI agents are evolving within enterprise and technology ecosystems. Rather than predicting specific outcomes, it focuses on observable directions shaping how agents are designed, governed, and integrated into organizations.

These developments reflect shifts in role, scale, and interaction rather than isolated technical advances.

From Tools to Digital Teammates

AI agents are increasingly positioned as active participants in workflows rather than passive tools. Instead of responding only when invoked, they operate alongside human teams, managing tasks, surfacing insights, and coordinating actions.

This shift changes how work is organized. Agents assume responsibility for defined functions, while humans focus on judgment, strategy, and exception handling.

A clear role definition is essential to prevent ambiguity between human and agent responsibilities.

Emergence of Agent Ecosystems

As adoption grows, AI agents are less likely to operate in isolation. Multiple agents with specialized roles are being designed to interact within shared environments.

These ecosystems enable a division of labor in which agents collaborate or delegate tasks to one another. Coordination mechanisms, shared context, and communication protocols become critical to avoid conflict or inefficiency.

Managing agent ecosystems introduces new architectural and governance considerations.

Increased Autonomy with Stronger Governance

Advances in reasoning, planning, and system integration are enabling greater agent autonomy. At the same time, organizations are placing increased emphasis on guardrails, monitoring, and accountability.

Rather than maximizing autonomy indiscriminately, organizations are moving toward calibrated autonomy: agents are given freedom within clearly defined operational envelopes.
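One way to picture an operational envelope is as an explicit check that runs before every action. The sketch below assumes a hypothetical `Envelope` with an action allowlist and two monetary thresholds. It lets the agent act alone on small, permitted actions, routes larger ones to a human checkpoint, and blocks anything outside the envelope. The names and limits are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"    # inside the envelope: the agent acts on its own
    ESCALATE = "escalate"  # permitted, but needs a human checkpoint first
    BLOCK = "block"        # outside the envelope entirely


@dataclass(frozen=True)
class Envelope:
    allowed_actions: frozenset[str]
    auto_approve_limit: float  # hypothetical threshold, e.g. in USD
    hard_limit: float          # never exceeded, even with human approval


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float


def check(action: ProposedAction, env: Envelope) -> Decision:
    """Classify a proposed action against the envelope before it runs."""
    if action.name not in env.allowed_actions or action.amount > env.hard_limit:
        return Decision.BLOCK
    if action.amount > env.auto_approve_limit:
        return Decision.ESCALATE
    return Decision.EXECUTE


# Hypothetical envelope for a refund-handling agent.
refund_envelope = Envelope(
    allowed_actions=frozenset({"issue_refund", "send_email"}),
    auto_approve_limit=100.0,
    hard_limit=1_000.0,
)

print(check(ProposedAction("issue_refund", 40.0), refund_envelope))   # Decision.EXECUTE
print(check(ProposedAction("issue_refund", 450.0), refund_envelope))  # Decision.ESCALATE
print(check(ProposedAction("delete_account", 0.0), refund_envelope))  # Decision.BLOCK
```

Keeping the envelope as data, separate from the agent's reasoning, means the boundaries can be reviewed, versioned, and tightened without retraining or re-prompting the agent.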

This balance reflects growing awareness of both the power and the risk of autonomous decision-making.

Human–Agent Collaboration Models

Future deployments emphasize collaboration rather than replacement. AI agents augment human capabilities by handling coordination, analysis, and execution tasks that benefit from speed and consistency.

Humans remain responsible for setting objectives, interpreting outcomes, and making high-stakes decisions. Predictable behavior and transparency are essential for effective collaboration.

Designing for trust and usability becomes as important as technical performance.

Digital Labor: AI Agents as a Digital Workforce

In many organizations, AI agents are increasingly treated as digital labor (a managed operational workforce). They are assigned roles, monitored for performance, and governed through policies similar to those applied to human teams.

This framing supports clearer accountability and lifecycle management. It also reinforces the need for evaluation, retraining, and retirement processes for agents. Viewing agents as operational entities helps align technical capabilities with organizational structures.

These trends suggest that the future of AI agents is defined less by novelty and more by integration. Their impact will depend on how effectively they are embedded into systems, processes, and governance frameworks.

AI Agents: Implications for Leadership and Operations

AI agents shift AI from output generation to goal-directed action. The main question is no longer “How good is the model?” but “Where is autonomy appropriate, and how is it controlled?”

For Executives and Enterprise Leaders

Use AI agents when you need speed, scale, and coordination across systems, not just better responses.

Decisions to make upfront:

Green-light criteria before scaling:

For Operators, Architects, and Practitioners

Treat agents as systems, not models. Reliability comes from orchestration, constraints, and monitoring.

Operational must-haves:

Common failure modes:

Bottom line: AI agents deliver value when autonomy is scoped, actions are governed, and accountability is explicit.

Sources

Institutional & Standards References

[1] National Institute of Standards and Technology (NIST) (2023). “Artificial Intelligence Risk Management Framework (AI RMF 1.0)”. [View Source] Supports: Governance and risk controls for agentic systems.

Academic & Research References

[2] Calvino, F. et al. (OECD, 2024). “The effects of generative AI on productivity, innovation, and entrepreneurship”. [Linked Above] Supports: Evidence on productivity impacts and adoption conditions.

[3] Franklin, S. & Graesser, A. (Univ. of Memphis, 1996). “Is it an Agent, or just a Program?: A Taxonomy for Autonomous Agents”. [Linked Above] Supports: Criteria distinguishing agents from programs.

[4] Russell, S. & Norvig, P. (UC Berkeley, 2022). “Artificial Intelligence: A Modern Approach (4th Edition)”. [View Source] Supports: Canonical framing of intelligent agents.

[5] Yao, S. et al. (Princeton/Google DeepMind, 2023). “ReAct: Synergizing Reasoning and Acting in Language Models”. [View Source] Supports: Reasoning-plus-action in tool-using workflows.

[6] Acharya, D. et al. (IEEE Access, 2025). “Agentic AI: Autonomous Intelligence for Complex Goals—A Comprehensive Survey”. [View Source] Supports: Research synthesis on agentic AI capabilities and limits.

Enterprise & Analyst References

[7] IBM (Think Blog, 2026). “What Are AI Agents?”. [Linked Above] Supports: Enterprise definition of agents and workflow boundaries.

[8] Google (Google Cloud, 2026). “Multi-agent AI system in Google Cloud”. [Linked Above] Supports: Reference architecture patterns for multi-agent systems.

[9] Gartner (2024). “How Intelligent Agents in AI Can Work Alone”. [View Source] Supports: Analyst framing of intelligent agent scope and enterprise use.

Notes

Editorial Notes

This article provides a decision-support analysis. It is written for enterprise leaders, operators, and technical practitioners, with an emphasis on clarity, accuracy, and real-world application.

Where concepts or terminology vary across academic, industry, or regulatory sources, we prioritize definitions and framing that are broadly accepted and operationally meaningful.

AI & Editorial Disclosure

AI tools were used to support research, outlining, structural organization, and drafting.

All content was reviewed, fact-checked, and edited by human reviewers to ensure accuracy, context, and alignment with current engineering and governance standards. No part of this article was published without human oversight.

Corrections & Accountability

If you find a factual error, a source issue, or outdated information, please contact us at info@ntechai.com.

To learn more about our mission and editorial standards, visit our About page, or explore NTechAI Insights for ongoing analysis.

Rights & Attribution

© NTechAI. All rights reserved. Portions of this content may reference open research and academic concepts credited to their respective authors. All original content is protected by copyright law and may not be reproduced without permission, except as permitted by law.