The AI Ecosystem: Strategy, Stack & Responsible AI

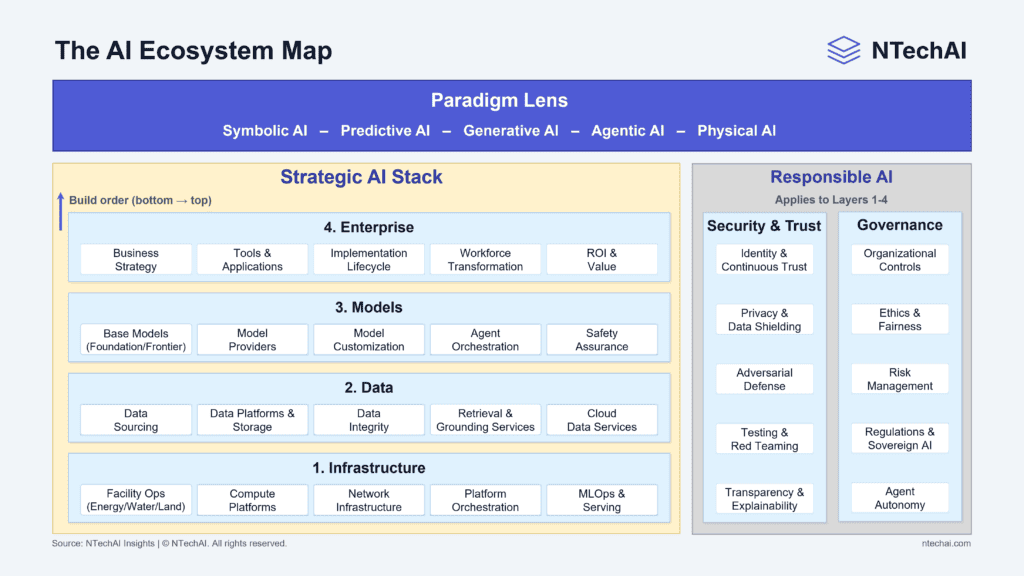

A decision-support guide to the AI ecosystem. It shows how infrastructure, data, models, and governance connect, and where generative AI fits for safe use.

Executive Summary

Artificial intelligence (AI) is shifting from isolated pilots to enterprise production. That shift requires leaders to think in systems, not tools. This guide provides a clear map of the AI ecosystem so you can design, deploy, and scale AI quickly, with control and measurable business value.

Who this is for: Executives, technology leaders, product owners, and risk leaders who need a shared model for enterprise AI strategy. It is especially useful for teams moving from prototype to production, scaling large language model (LLM) applications, or standardizing responsible AI practices.

What’s inside: A decision-grade AI ecosystem model spanning the Strategic AI Stack (infrastructure, data, models, and enterprise adoption), Responsible AI Overlays (security, trust, and governance), and the Paradigm Lens (symbolic, predictive, generative, agentic, and physical). Each section is written to support prioritization, sequencing, and linking to deeper playbooks.

Key Takeaways

Introduction: The Industrialization of Intelligence

AI is entering an industrial phase. Capabilities once limited to specialized research teams are now delivered through platforms, APIs (Application Programming Interfaces), and reusable building blocks, including foundation models and enterprise-grade MLOps.

This changes what matters. Winning is less about adopting the newest model and more about building a repeatable system that connects data, models, workflows, and people with the right security and governance controls.

This ecosystem framework is designed for that reality. It gives leaders a shared language to align investments, manage risk, and scale AI into real operations, while supporting consistent execution across technology and risk teams.

What Is an AI Ecosystem?

An AI ecosystem is the full set of capabilities required to deliver AI outcomes reliably in the real world. It includes infrastructure, data platforms, models, workflows, people, and controls that together turn AI from a demo into a repeatable business capability.

In enterprise terms, an AI ecosystem is an operating system for intelligence. It explains how AI is built, deployed, governed, secured, and improved over time, across the entire AI lifecycle from data pipelines to production monitoring.

A strategic view of how AI is built, governed, and scaled, from foundational infrastructure and data to models, enterprise adoption, and responsible AI overlays.

The NTechAI Model for an AI Ecosystem

The NTechAI model organizes AI into three complementary views that leaders can use to align strategy and execution.

1) Strategic AI Stack (Layers 1–4): This is the build order. AI infrastructure provides compute, networks, and platform orchestration. The AI data ecosystem turns raw inputs into trusted, retrievable context. The AI models ecosystem converts that context into reasoning, generation, and action. The enterprise AI ecosystem drives adoption, integration, and measurable value.

2) Responsible AI Overlays: These are cross-cutting controls that apply wherever AI runs. Security & trust focus on protection, resilience, and verifiable operation. Governance focuses on decision rights, ethics and fairness, risk management, compliance, and boundaries of agent autonomy.

3) Paradigm Lens: This is the “how it behaves” lens across everything. Symbolic, predictive, generative, agentic, and physical AI paradigms shape expectations, failure modes, and required controls. Using the wrong paradigm assumptions is one of the fastest ways to create poor ROI or unmanaged risk.

Together, these three views act like a practical AI reference architecture. They help leaders connect technology choices to operating model decisions, and they provide a shared language across business, engineering, and risk teams.

How to Use This Map: A Decision Guide

Use the map in two ways: diagnose and sequence.

Diagnose: If your AI initiative stalls, identify which layer is actually failing. Many “model problems” are data-integrity problems, retrieval and grounding problems, MLOps deployment problems, or workflow-adoption problems. Trace the issue down the stack until you find the real bottleneck.

Sequence: If you are planning an investment, decide which layer to strengthen first to unlock the next. Build minimum viable foundations (platform, data, evaluation) before scaling model usage. For agentic workflows, prioritize identity, tool access controls, monitoring, and human oversight early.

A good executive shortcut is to ask three questions for any AI program:

What layer is this primarily dependent on?

What controls must be in place for us to trust it?

What operating model makes it sustainable at scale?

As you continue, each ecosystem section will unpack one part of the map in a consistent format so you can skim for decisions now and dive deeper later.

AI Paradigms: A Lens Across the Entire AI System

AI paradigms describe the main ways intelligence is represented and applied in systems. They are not layers in a stack. They are design approaches that shape how models behave, what “correct” looks like, and which risks matter most.

Why it matters: Paradigms determine expectations, controls, and operating models. When leaders confuse paradigms, they misprice risk, overinvest in the wrong capabilities, and apply the wrong trust model. Getting the paradigm right helps align use cases, evaluation, governance, and value creation with how the system actually behaves in production.

Major AI Paradigms: From Prediction and Generation to Autonomous Systems

1. Symbolic AI: Rules, Logic, Search, and Knowledge Graphs

Symbolic AI represents intelligence through explicit rules, logic, and structured relationships. It relies on human-defined knowledge, such as policy rules, ontologies, and knowledge graphs, rather than learned statistical patterns.

This paradigm applies to domains that require traceability and deterministic reasoning, such as compliance rule engines, eligibility logic, and workflow constraints. It is often paired with modern machine learning (ML) in hybrid and neuro-symbolic systems when organizations need both learning and explainability.

2. Predictive AI (or Discriminative AI): Classification, Forecasting, and Scoring

Predictive AI uses machine learning to map inputs to outputs, such as classifying transactions, forecasting demand, scoring risk, or detecting anomalies. It includes both classic ML and deep learning models trained for specific tasks, with clear performance metrics such as accuracy, precision/recall, and calibration.

This paradigm is strong when the target is known and measurable. It is less suitable for free-form language generation, reasoning over ambiguous instructions, or multi-step task execution.

3. Generative AI: LLMs, Content Generation, and Multimodal Synthesis

Generative AI produces outputs such as text, code, images, audio, and video. In the enterprise, it powers assistants, summarization, drafting, knowledge search interfaces, and content workflows.

Because generative systems can sound confident even when wrong, they typically require grounding via retrieval, safety controls, and evaluation beyond traditional predictive metrics. This is where techniques like RAG (Retrieval-Augmented Generation), policy guardrails, and human review protocols become central.

4. Agentic AI: Planning, Tool Calling, and Workflow Execution

Agentic systems extend generative capabilities into autonomy and tool use, as detailed in a comprehensive survey of agentic AI architectures and paradigms. Instead of only answering, AI agents can execute workflows, such as creating tickets, pulling data, updating records, or coordinating multi-step tasks.

This paradigm changes the risk surface. The key questions become tool access, agent identity, autonomy boundaries, monitoring, and rollback. Leaders should treat agentic systems more like intelligent automation than like chat interfaces.

5. Physical AI: Robotics, Edge AI, and Embodied Intelligence

Physical AI applies intelligence to devices that sense and act in the real world. It includes robotics, autonomous vehicles, drones, and industrial systems that combine perception, control, and decision-making.

Because physical AI can cause real-world harm, it brings additional safety, reliability, testing, and liability considerations. It also increases the importance of edge inference, real-time constraints, and resilience under imperfect data and connectivity.

Decisions & Next Steps

Paradigms explain how intelligence behaves. Infrastructure determines whether that intelligence can run reliably, securely, and cost-effectively in real operations. Next, we move from concepts to the physical and digital foundation required for AI at scale.

AI Infrastructure Ecosystem: The Physical and Digital Foundation of AI

The AI Infrastructure Ecosystem is the foundation that runs AI workloads end-to-end, from training and fine-tuning to inference and production serving. It includes the facilities, compute, networks, orchestration, and runtime operations that keep AI systems performant, resilient, and secure.

Why it matters: Infrastructure choices determine whether AI is fast and dependable or slow and fragile. They directly shape latency, throughput, uptime, security posture, and unit economics, including the real cost per model call, per-agent workflow, and per-customer experience.
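The unit-economics point can be made concrete with a small calculation. The sketch below is illustrative only: the per-token prices, the token counts, and the names `ModelPricing`, `cost_per_call`, and `cost_per_workflow` are assumptions invented for this example, not figures from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    """Hypothetical prices in USD per 1,000 tokens (made-up numbers)."""
    input_per_1k: float
    output_per_1k: float


def cost_per_call(pricing, input_tokens, output_tokens):
    """Cost of one model call, priced separately for input and output tokens."""
    return (input_tokens / 1000) * pricing.input_per_1k + (
        output_tokens / 1000
    ) * pricing.output_per_1k


def cost_per_workflow(pricing, calls):
    """Cost of an agent workflow given as a list of (input_tokens, output_tokens)."""
    return sum(cost_per_call(pricing, i, o) for i, o in calls)


# Assumed prices and a three-step agent workflow, for illustration only.
pricing = ModelPricing(input_per_1k=0.001, output_per_1k=0.002)
workflow = [(2000, 500), (1000, 200), (4000, 1000)]
total = cost_per_workflow(pricing, workflow)
print(f"cost per workflow: ${total:.4f}")
```

Multiplying the per-workflow figure by expected monthly volume gives a first estimate of run cost that can be compared against the value of the workflow it supports.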

Core Components of the AI Infrastructure Ecosystem

1. Facility Operations: Power, Cooling, Water, and Space

Facility operations provide the physical environment that keeps AI compute running safely and continuously. This includes power capacity and redundancy, cooling design, water usage, and space constraints for racks and high-density hardware.

As AI workloads grow more energy-intensive, facility limits increasingly dictate what can run where and how quickly capacity can scale. For many organizations, facility readiness becomes a hidden bottleneck for AI expansion.

2. Compute Platforms: CPU, GPU, Accelerators, and High-Performance Storage

Compute platforms provide the processing power required for AI workloads, including CPUs (Central Processing Units) for orchestration and GPUs (Graphics Processing Units) optimized for AI training and inference, supported by high-throughput storage and efficient data access paths.

Compute strategy is a core driver of performance and cost. Many enterprises adopt a portfolio approach: premium GPUs for high-value workloads, and cost-efficient compute for routine or bursty demand.

This approach is consistent with Intel’s guidance on AI infrastructure strategy and compute selection at enterprise scale, which emphasizes workload-specific sizing, orchestration, and lifecycle cost management.

3. Network Infrastructure: Bandwidth, Latency, and Security Boundaries

Network infrastructure connects data, models, users, tools, and environments across regions and clouds. It governs bandwidth and latency, segmentation, secure connectivity, and the boundaries that protect critical systems.

Weak network design can negate expensive compute investments. Strong network foundations enable distributed training, real-time inference, secure agent tool access, and reliable cross-region operations.

4. Platform Orchestration: Clusters, Schedulers, and Containers

Platform orchestration controls how AI workloads are scheduled, scaled, isolated, and managed across compute resources. It includes clusters, schedulers, containerization, and standardized runtime environments that support repeatable deployments.

This layer is what turns infrastructure into a usable platform. It improves utilization, reduces environment drift, and enables consistent operations across development, staging, and production.

5. MLOps and Serving: CI/CD, Registry, Deployment, Observability, and FinOps

MLOps and serving operationalize AI systems in production. This includes CI/CD (Continuous Integration / Continuous Deployment) for models, model registries, deployment workflows, inference runtimes, monitoring and observability, and FinOps (Financial Operations) cost controls.

Without MLOps, AI becomes difficult to update, test, and govern. With it, enterprises can manage model versions, detect drift, trace incidents, and scale AI workloads with predictable reliability and cost visibility.
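As one example of what "detect drift" can mean in practice, the sketch below computes the Population Stability Index (PSI), a commonly used measure of how far a feature's production distribution has moved from its training baseline. It is a minimal illustration, assuming equal-width bins over the baseline range; the function name `psi`, the bin count, and the sample data are choices made for this example.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a production sample.

    Bins are equal-width over the baseline range; production values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]        # roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]   # production data moved upward
print(psi(baseline, baseline), psi(baseline, shifted))
```

A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds should be validated against each use case rather than taken as fixed.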

Trade-Offs/Pitfalls

Many organizations overinvest in raw GPU capacity while underinvesting in orchestration and operations, leading to idle compute and runaway costs. Others optimize for speed at the expense of resilience, creating fragile systems that fail under load.

A common executive mistake is treating AI infrastructure as a one-time build rather than a product platform that must evolve with workload growth and governance needs.

Decisions & Next Steps

Infrastructure provides the horsepower and reliability. Data determines whether that power produces accurate, relevant outcomes. Next, we move to the AI data ecosystem and how enterprises turn raw information into trusted, retrievable context for models and agents.

AI Data Ecosystem: Turning Raw Data Into Trusted Intelligence

The AI Data Ecosystem is the set of capabilities that turns raw enterprise information into usable, trusted context for AI systems. It covers how data is sourced, stored, validated, retrieved, and delivered so models and agents can produce accurate, relevant outputs.

Why it matters: AI quality is capped by data quality and data access. Weak data foundations increase the chance of incorrect or inconsistent outputs and elevate compliance risk. Strong data ecosystems improve accuracy, speed to production, and confidence in AI-assisted decisions across the business.

Core Components of the AI Data Ecosystem

1. Data Sourcing: Structured, Unstructured, and Streaming

Data sourcing is the process by which information enters an AI system from databases, business applications, documents, devices, and external feeds. It includes structured records (ERP, CRM), unstructured content (PDFs, emails, policies), and streaming events (IoT, clickstreams).

Good sourcing prevents “partial truth” AI by ensuring models have access to the same operational reality leaders expect, not isolated snapshots or stale knowledge.

2. Data Platform & Storage: Lakes, Warehouses, Object Storage, Catalogs, and Vector DB

Data platform and storage provide the backbone for organizing and retaining AI-relevant information. This includes data lakes and warehouses, object storage, metadata catalogs, and increasingly vector databases for embeddings and semantic search.

The goal is not just storage volume. It is discoverability, performance, retention and backup, and the ability to serve both analytics and AI workloads consistently.

3. Data Integrity: Quality, Permissions, and Compliance/Lineage

Data integrity ensures the right data is used, at the right quality level, by the right users and systems. It includes quality validation, deduplication signals, access controls, and lineage tracking that shows where data came from and how it was transformed.

This is where many enterprise AI programs succeed or fail. Industry guidance on what AI-ready data means for enterprise AI success underscores that trusted, accessible, and governed data is foundational to reliable AI outcomes at scale.

4. Retrieval & Grounding Services: Embeddings, Indexing, and RAG/MCP

Retrieval and grounding services connect models to trusted knowledge at runtime. Using embeddings, indexing, and retrieval pipelines, RAG systems fetch relevant context for prompts, such as policies, product documentation, contracts, or ticket histories.

As AI systems become more agentic, MCP (Model Context Protocol) introduces a standardized way for models to access external context and tools at runtime. MCP complements RAG by reducing the need for custom integrations and improving workflow consistency.

Grounding improves relevance and reduces the chance of confidently wrong answers, especially in fast-changing domains. It also supports auditability by showing which sources informed a response.
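The retrieve-then-prompt flow described above can be sketched in a few lines. This is a toy illustration, not a production design: `embed` is a bag-of-words stand-in for a learned embedding model, the in-memory dictionary stands in for a vector database, and the document ids and texts are invented for the example.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase bag-of-words counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(query, documents, k=2):
    """Return the k (doc_id, text) pairs most similar to the query."""
    q = embed(query)
    ranked = sorted(
        documents.items(), key=lambda kv: cosine(q, embed(kv[1])), reverse=True
    )
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Assemble a grounded prompt that carries source ids for auditability."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, documents, k))
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n\nQuestion: {query}"
    )


# Hypothetical enterprise snippets, for illustration only.
DOCS = {
    "refund-policy": "refunds are issued within 14 days of purchase",
    "shipping-policy": "standard shipping takes 5 business days",
    "security-policy": "passwords must be rotated every 90 days",
}
print(build_prompt("how many days until refunds are issued", DOCS, k=1))
```

Keeping the source ids in the prompt is what later allows a response to be traced back to the documents that informed it.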

5. Cloud Data Services: Managed Services, Integration Patterns, and Tenancy

Cloud data services provide managed capabilities for ingestion, processing, storage, and integration across environments. They help teams scale data pipelines without rebuilding core components and support multi-account or multi-tenant structures for security, cost allocation, and organizational separation.

This layer matters most when enterprises run hybrid architectures, integrate multiple business units, or need consistent patterns for governed data sharing.

Trade-Offs/Pitfalls

Many organizations over-index on “centralizing all data,” creating bottlenecks that slow teams down when shipping AI. Others build large storage estates without metadata, lineage, or retrieval design, making data hard for AI to use.

A common mistake is treating RAG as a quick plug-in instead of a product-grade retrieval service with indexing, evaluation, and change management.

Decisions & Next Steps

Once your data is usable and retrievable, models can finally apply it consistently. Next, we move to the AI models ecosystem, where base models, customization, orchestration, and assurance determine what AI can do and how safely it can operate in production.

AI Models Ecosystem: From Foundation Models to Agent Orchestration

The AI Models Ecosystem is how enterprise AI capability is created, accessed, adapted, and coordinated. It spans base models (foundation, frontier, and small models), the providers that deliver them, customization methods that align models to your domain, orchestration that turns models into workflows and agents, and assurance that keeps performance reliable over time.

Why it matters: Model choices determine what AI can do, how much it costs to run, and how dependable it is in production. At this layer, leaders manage trade-offs across accuracy, latency, vendor dependence, controllability, and the trust required to use AI in high-impact workflows.

Core Components of the AI Models Ecosystem

1. Base Models: Foundation Models, Frontier Models, and Small Language Models

Base models are the core “brains” that power AI features such as reasoning, generation, and summarization. They range from large foundation and frontier models that handle complex tasks to smaller language models optimized for speed, privacy, or high-volume use.

Most enterprises benefit from a portfolio approach. Use high-capability models for complex reasoning and long-form tasks, and smaller models for routine classification, extraction, or internal copilots where cost and latency matter.

2. Model Providers: Access, Licensing, Hosting, and Updates

Model providers are the organizations and platforms that supply models via APIs, managed services, or self-hosted distributions. This includes pricing, licensing terms, data-handling policies, regional availability, and model-update channels.

Provider decisions shape long-term flexibility. They affect lock-in risk, the ability to run models in specific jurisdictions, how updates are managed, and whether model behavior changes without your control.

3. Model Customization: Fine-Tuning, Adapters, and Grounding Integration

Model customization aligns a general-purpose model to a specific domain, brand voice, or workflow. Techniques include fine-tuning, lightweight adapters such as LoRA (Low-Rank Adaptation), and grounding integration that injects retrieved enterprise context at runtime.

A useful executive rule of thumb is: fine-tune for stable behavior and style, ground for changing knowledge. Many teams get better results by combining both, with clear evaluation gates.

4. Agent Orchestration: Workflows, Model Routing, and Tool Use

Agent orchestration turns models into systems that can execute tasks reliably, using standardized interfaces for tool access and context delivery, such as emerging protocols like MCP.

It includes workflow design, prompt patterns, model routing (sending the right task to the right model), and controlled use of tools, such as calling enterprise APIs, databases, or ticketing systems.

This is where AI shifts from “answers” to “actions.” When done well, orchestration improves reliability and reduces costs by making AI behavior repeatable and selecting the lowest-cost model that meets the task requirements.
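Model routing as described here, selecting the lowest-cost model that meets the task requirements, can be sketched as a filter followed by a minimum. The catalog entries, the 1 to 5 capability scale, and the cost and latency figures below are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    capability: int            # hypothetical 1-5 scale from internal evaluation
    cost_per_1k_tokens: float  # assumed USD figure
    p95_latency_ms: int        # assumed latency figure


def route(models, min_capability, max_latency_ms):
    """Pick the cheapest model that satisfies capability and latency limits."""
    eligible = [
        m for m in models
        if m.capability >= min_capability and m.p95_latency_ms <= max_latency_ms
    ]
    if not eligible:
        raise LookupError("no model meets the task requirements")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Illustrative catalog; names and numbers are made up.
CATALOG = [
    ModelOption("small", capability=2, cost_per_1k_tokens=0.0005, p95_latency_ms=300),
    ModelOption("medium", capability=3, cost_per_1k_tokens=0.003, p95_latency_ms=800),
    ModelOption("large", capability=5, cost_per_1k_tokens=0.03, p95_latency_ms=2500),
]

print(route(CATALOG, min_capability=2, max_latency_ms=1000).name)  # routine task
print(route(CATALOG, min_capability=4, max_latency_ms=3000).name)  # complex task
```

Raising an error when no model qualifies, rather than silently falling back to the largest model, keeps cost and latency surprises visible to the team that owns the workflow.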

5. Safety Assurance: Evaluation, Guardrails, and Quality Monitoring

Safety assurance ensures that model outputs remain correct, useful, and aligned with expectations throughout development and production. It includes evaluation harnesses, benchmark tests, regression checks, policy and quality guardrails, and monitoring for drift or degradation.

This component is the evidence layer. It helps decide where AI can be trusted, which use cases need human review, and when updates should be rolled back or re-evaluated.
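A regression check of the kind mentioned above can be expressed as a simple release gate. The sketch below assumes exact-match scoring against a small fixed test set, which is a simplification (generative outputs usually need rubric-based or model-assisted grading); the thresholds and the names `evaluate` and `release_gate` are illustrative assumptions.

```python
def evaluate(model_fn, cases):
    """Fraction of (prompt, expected) cases where the model output matches exactly."""
    passed = sum(1 for prompt, expected in cases if model_fn(prompt) == expected)
    return passed / len(cases)


def release_gate(baseline_score, candidate_score, min_score=0.9, max_regression=0.02):
    """Decide whether a candidate model version may replace the baseline."""
    if candidate_score < min_score:
        return "block: below minimum quality bar"
    if baseline_score - candidate_score > max_regression:
        return "block: regression against baseline"
    return "promote"


# Stub "models" backed by lookup tables, standing in for real inference calls.
CASES = [("2+2", "4"), ("capital of France", "Paris"), ("3*3", "9"), ("1+1", "2")]
baseline_model = dict(CASES).get
candidate_model = {"2+2": "4", "capital of France": "Paris", "3*3": "6", "1+1": "2"}.get

decision = release_gate(evaluate(baseline_model, CASES), evaluate(candidate_model, CASES))
print(decision)
```

The same gate can run in a CI/CD pipeline so that a model update is promoted or rolled back based on recorded evidence rather than ad hoc judgment.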

Trade-Offs/Pitfalls

Many organizations default to a single large model for everything, only to be surprised by cost and latency. Others over-invest in fine-tuning when retrieval and grounding would better handle changing knowledge.

A frequent failure mode is launching agent workflows without a strong evaluation baseline, which makes behavior unpredictable and hard to manage at scale.

Decisions & Next Steps

Once models are selected and operationalized, value depends on where they are deployed and how work changes around them. Next, we move to the enterprise AI ecosystem, where strategy, applications, delivery lifecycle, workforce adoption, and value measurement turn AI capability into business outcomes.

Enterprise AI Ecosystem: Turning AI Capability Into Business Value

The Enterprise AI Ecosystem is the set of leadership, delivery, and adoption capabilities that turn AI technology into measurable outcomes. It connects business strategy, applications, implementation pathways, workforce change, and value measurement so AI moves from pilots to durable, enterprise-wide impact.

Why it matters: Most AI programs fail for non-technical reasons. Without clear priorities, ownership, integration into workflows, and credible ROI measurement, even strong models and data stacks generate cost and activity without sustained value.

Core Components of the Enterprise AI Ecosystem

1. Business Strategy: Use-Case Portfolio, Priorities, and Vertical vs Horizontal

Business strategy defines where AI should be applied and what “success” means. This includes selecting a use-case portfolio, choosing vertical bets (deep in a single function) versus horizontal platforms (shared across the enterprise), and aligning investments with core business priorities.

A clear strategy reduces fragmentation and prevents teams from building disconnected solutions that cannot scale or be governed consistently.

2. Tools & Applications: AI Products, Embedded Workflows, APIs, and Interoperability

Tools and applications are where users experience AI in practice. This includes general-purpose AI tools, embedded AI features inside enterprise systems, API-based integrations, and connectors that enable interoperability across the tech stack.

The goal is not more tools. It is usable AI that fits real workflows, reduces friction, and integrates with systems of record, identity, and data access patterns.

3. Implementation Lifecycle: Pilot to Production, Build/Buy/Partner, and Integration

The implementation lifecycle is the process by which AI solutions are delivered, scaled, and maintained. It covers piloting, validation, architectural choices, enterprise integration, and the build vs. buy vs. partner decision.

A disciplined lifecycle prevents pilot purgatory. It also clarifies who owns what, how changes are deployed, and how solutions are supported once they become operational.

4. Workforce Transformation: Adoption, Change Management, and Digital Labor

Workforce transformation is how work actually changes when AI arrives. It includes change management, task redesign, reskilling, and clarifying human–AI collaboration, including review roles for high-impact decisions.

A core shift is the introduction of digital labor: AI systems that execute tasks or workflows previously performed by humans. As digital labor expands, human roles move toward judgment, oversight, exception handling, and continuous improvement rather than routine execution.

Adoption is not automatic. It improves when AI is positioned as a practical augmentation, when incentives support human–AI collaboration, and when operating models clearly define where digital labor can act independently and where human approval is required.

5. Measuring ROI & Value: Business Outcomes, Adoption Metrics, and Performance KPIs

Measuring ROI and value is the discipline of proving AI impact and guiding iteration. It includes hard ROI (cost reduction, revenue lift, time savings) and soft ROI (decision quality, customer experience, cycle-time reduction), as well as adoption and operational metrics.

Strong measurement creates focus. It helps leaders decide what to scale, what to stop, and where to invest additional resources in data, MLOps, or training to compound value.

Trade-Offs/Pitfalls

Leaders often start with tools instead of strategy, then struggle to align use cases and ownership. Another common pitfall is launching many pilots without a clear path to production, support, and measurement. Many teams also underinvest in adoption and process redesign, then blame models for results that are actually workflow issues.

Decisions & Next Steps

As AI reaches customers and mission-critical workflows, trust becomes a growth constraint, not a nice-to-have. Next, we move to the AI security and trust ecosystem, where identity, privacy, adversarial defense, testing, and transparency protect AI systems at scale.

AI Security & Trust Ecosystem: Protecting AI Systems at Scale

The AI Security & Trust Ecosystem comprises the technical controls that protect AI systems in production. It covers identity, privacy protections, adversarial defenses, validation testing, and transparency mechanisms that help keep AI behavior reliable, secure, and auditable as systems scale.

Why it matters: As AI becomes embedded in core workflows and agentic systems can take actions, failures scale quickly. Strong trust controls reduce the likelihood of data leakage, misuse, unsafe outputs, and operational disruption, while enabling faster, more confident deployment across the enterprise.

Core Components of the AI Security & Trust Ecosystem

1. Identity & Continuous Trust: IAM, Agent Identity, Telemetry, and Incident Response

Identity and continuous trust ensure only authorized users, services, and AI agents can access models, data, and tools. This includes IAM (Identity and Access Management) and SSO (Single Sign-On), least-privilege permissions, service-to-service authentication, secrets management, and continuous telemetry.

For agentic AI, identity extends to “who is acting” and “what is allowed.” Monitoring and incident response close the loop by detecting abnormal behavior and enabling rapid containment and recovery.

2. Privacy & Data Shielding: Encryption, DLP, PII Controls, and Privacy-Preserving ML

Privacy and data shielding protect sensitive data across ingestion, storage, retrieval, and inference. This includes encryption, key management, data loss prevention, PII (Personally Identifiable Information) controls, retention policies, and privacy-preserving techniques such as federated learning and differential privacy, where appropriate.

The practical aim is simple: reduce exposure of sensitive information while preserving utility. That makes privacy a performance enabler for regulated use cases, not just a compliance checkbox.
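One simple form of PII control is redacting obvious identifiers before text reaches a model or a log. The sketch below is a minimal illustration using two regular expressions; the patterns cover only email addresses and US-style Social Security numbers and are assumptions for this example, far short of what a full data loss prevention service detects.

```python
import re

# Illustrative patterns only; real PII detection needs much broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text):
    """Replace each matched identifier with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Contact jane.doe@example.com, SSN 123-45-6789."))
```

Replacing values with typed labels rather than deleting them preserves enough structure for the model to stay useful, which is the utility-versus-exposure balance described above.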

3. Adversarial Defense & Guardrails: Prompt Injection, Jailbreaks, and Misuse Controls

Adversarial defense protects AI systems from manipulation and unsafe behavior. It includes defenses against prompt injection, jailbreak attempts, data exfiltration patterns, model evasion, and misuse scenarios, as well as runtime guardrails that enforce policy and safety boundaries.

This is where “LLM app security” differs from traditional application security. Natural-language input can act as instructions to the system, so defenses must anticipate hostile prompts and tool misuse, not just malicious code.

4. Testing & Red Teaming: Abuse Simulation, Pen Testing, and Supply-Chain Validation

Testing and red teaming surface failures before they reach customers or are found by attackers. This includes adversarial testing, abuse and misuse simulation, penetration testing of the surrounding application, and supply-chain validation of third-party models, containers, and dependencies.

Supply-chain controls such as SBOMs (Software Bills of Materials), provenance checks, and update validation reduce the risk of compromised artifacts entering production environments.

5. Transparency & Explainability (XAI): Documentation, Traceability, and Audit Logs

Transparency and explainability provide visibility into how AI systems behave and why outcomes occurred. This includes documentation such as model cards, decision traceability, audit logs, and explainable AI techniques, where appropriate for the use case.

These signals support debugging, incident investigations, and stakeholder trust. They also help teams answer practical questions, such as which model version produced the output and which sources informed the response.
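Answering "which model version produced the output and which sources informed the response" requires recording those facts at request time. The sketch below shows one possible audit record; the field names, the choice to store SHA-256 digests instead of raw text, and the example identifiers are assumptions for illustration, not a prescribed schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    request_id: str
    model_version: str
    source_ids: tuple    # ids of retrieved documents that grounded the answer
    prompt_sha256: str   # digests avoid copying sensitive text into logs
    output_sha256: str
    created_at: str      # UTC timestamp in ISO 8601 format


def _digest(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_record(request_id, model_version, source_ids, prompt, output):
    """Build an audit record for one model response."""
    return AuditRecord(
        request_id=request_id,
        model_version=model_version,
        source_ids=tuple(source_ids),
        prompt_sha256=_digest(prompt),
        output_sha256=_digest(output),
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record):
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    "req-001", "assistant-v3", ["refund-policy"],
    "How long do refunds take?", "Refunds are issued within 14 days.",
)
print(to_log_line(record))
```

Whether to log digests or full text is a policy decision that depends on retention rules and the sensitivity of the content.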

Trade-Offs/Pitfalls

Teams often implement traditional cybersecurity controls but overlook AI-specific threats, including prompt injection, misuse of tools, and retrieval-based data leakage. Overly strict guardrails can reduce usability and adoption, while weak monitoring leaves failures undetected until they become incidents.

A common mistake is treating trust as a one-time checklist rather than a continuous capability supported by testing, telemetry, and improvement loops.

Decisions & Next Steps

Security and trust controls protect systems in practice. Governance defines who is accountable, what is allowed, and how risk is managed across the organization. Next, we move to the AI governance ecosystem and the operating model leaders need to scale AI responsibly.

AI Governance Ecosystem: Accountability, Ethics, and Control at Scale

The AI Governance Ecosystem is how an organization sets accountability, decision rights, and risk boundaries for AI across the full lifecycle. It defines who can deploy AI, what is permitted, how impact is assessed, how autonomy is controlled, and how regulatory obligations are met as AI scales.

Why it matters: Governance is what lets AI move from interesting pilots to durable, high-trust systems. Without it, organizations face fragmented decision-making, unmanaged autonomy, inconsistent standards, and avoidable legal and reputational exposure that can stall adoption or force rollbacks.

Core Components of the AI Governance Ecosystem

1. Organizational Controls: Operating Model, Roles/RACI, Decision Rights, and Approval Gates

Organizational controls define how AI decisions get made and who owns outcomes. This includes the operating model, RACI roles, approval gates, documentation standards, escalation paths, and lifecycle accountability from design through retirement.

Strong controls reduce shadow AI and clarify ownership, especially when multiple business units deploy AI in parallel. They also make it clear where human oversight is required and who signs off when risk increases.

2. Ethics & Fairness: Principles, Bias, Human Impact, and Oversight Norms

Ethics and fairness establish the norms that guide AI use beyond technical performance. This includes expectations regarding fairness and bias, human-impact considerations, transparency, and standards for human oversight of sensitive decisions.

The objective is practical: avoid harm, reduce unfair outcomes, and ensure AI use aligns with organizational values and customer trust, even when the model appears to “perform well” on average metrics.

3. Risk Management & Assessments (Use-Case Tiering, Impact Assessments, Vendor Intake)

Risk management defines how AI risks are identified, categorized, and mitigated before deployment. It includes use-case tiering, AI impact assessments, model and data risk reviews, third-party/vendor intake, and control selection proportional to risk.

This is where governance becomes actionable. It translates “trustworthy AI” into specific requirements per use case, such as human-in-the-loop review, monitoring intensity, or restricted tool access for agents.
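To make the idea of controls proportional to risk concrete, here is a minimal Python sketch of use-case tiering. Everything in it is an illustrative assumption rather than part of the framework: the three risk factors, the counting rule in `assign_tier`, and the control lists in `REQUIRED_CONTROLS` are hypothetical and would be replaced by your own assessment criteria.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class UseCase:
    name: str
    affects_individuals: bool  # e.g. decisions about customers or employees
    uses_sensitive_data: bool  # e.g. personal or regulated data
    agent_can_act: bool        # the system takes actions, not only answers


def assign_tier(use_case: UseCase) -> RiskTier:
    """Count the risk factors present and map the count to a tier.

    Hypothetical rule: 0 factors -> LOW, 1 -> MEDIUM, 2 or more -> HIGH.
    """
    factors = sum(
        [
            use_case.affects_individuals,
            use_case.uses_sensitive_data,
            use_case.agent_can_act,
        ]
    )
    if factors >= 2:
        return RiskTier.HIGH
    if factors == 1:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Hypothetical control sets; higher tiers carry stricter requirements.
REQUIRED_CONTROLS = {
    RiskTier.LOW: ["basic logging"],
    RiskTier.MEDIUM: ["basic logging", "periodic monitoring review"],
    RiskTier.HIGH: [
        "basic logging",
        "continuous monitoring",
        "human-in-the-loop review",
        "restricted tool access",
    ],
}


def required_controls(use_case: UseCase) -> list:
    """Return the controls a use case must implement before deployment."""
    return REQUIRED_CONTROLS[assign_tier(use_case)]
```

The design choice worth noting is that the tier, not the individual team, determines the minimum control set, so two business units deploying similar use cases end up with the same requirements.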

4. Regulations & Sovereign AI (Regulatory Mapping, Data Residency, Cross-Border Rules)

Regulations and sovereign AI address how AI systems operate across jurisdictions and industries. This includes mapping use cases to regulations such as the EU AI Act and aligning with risk management guidance such as the NIST AI RMF. It also covers data residency, cross-border processing rules, and sector-specific requirements.

This component ensures the AI strategy is deployable in the real world. It prevents late-stage compliance surprises that force re-architecture, vendor changes, or withdrawal from certain markets.
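One way to surface residency issues early is to check planned processing locations against an allow-list during design review. The sketch below assumes a hypothetical mapping from dataset labels to permitted regions; the labels, region names, and the `residency_violations` helper are invented for illustration and do not reflect any specific regulation or cloud provider.

```python
# Hypothetical allow-list: which regions may process each class of data.
ALLOWED_PROCESSING_REGIONS = {
    "eu_customer_data": {"eu-west", "eu-central"},
    "us_customer_data": {"us-east", "eu-west"},
}


def residency_violations(dataset_label: str, planned_regions: list) -> list:
    """Return the planned regions that are not permitted for this dataset.

    An unknown dataset label has no permitted regions, so every planned
    region is reported. This keeps the check deny-by-default.
    """
    allowed = ALLOWED_PROCESSING_REGIONS.get(dataset_label, set())
    return sorted(set(planned_regions) - allowed)


violations = residency_violations("eu_customer_data", ["eu-west", "us-east"])
if violations:
    print("Residency review needed for regions:", violations)
```

A real mapping would come from legal and compliance review; the point of the sketch is only that the rule can be checked before architecture decisions are locked in.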

5. Agent Authorization & Autonomy (Delegation Policies, Autonomy Boundaries, Human-in-the-Loop)

Agent authorization defines what AI agents are allowed to do, where autonomy starts and stops, and when humans must intervene. It includes delegation policies, autonomy boundaries, tool permissions, approval workflows, and controls to reduce shadow agents operating outside governance.

As agents move from answering questions to taking actions, this becomes a core leadership decision. Well-defined autonomy boundaries unlock speed while preventing runaway behavior and unclear liability.
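Autonomy boundaries and tool permissions can be expressed as an explicit policy that is checked before an agent calls a tool. The following is a minimal sketch under stated assumptions: the `AgentPolicy` structure, the three-way `Decision`, and the tool names are hypothetical, and a production system would also need identity, logging, and an actual approval workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    allowed_tools: frozenset                 # tools the agent may call on its own
    approval_tools: frozenset = frozenset()  # tools that need human sign-off


def authorize(policy: AgentPolicy, tool: str) -> Decision:
    """Decide whether an agent may call a tool.

    Approval requirements are checked first so they always win, and any
    tool not named in the policy is denied by default.
    """
    if tool in policy.approval_tools:
        return Decision.REQUIRE_APPROVAL
    if tool in policy.allowed_tools:
        return Decision.ALLOW
    return Decision.DENY


# Hypothetical policy for a support agent.
support_agent = AgentPolicy(
    agent_id="support-agent",
    allowed_tools=frozenset({"search_knowledge_base", "draft_reply"}),
    approval_tools=frozenset({"issue_refund"}),
)
```

Denying unlisted tools by default is the key design choice here: a new capability stays unavailable to the agent until someone with decision rights adds it to the policy.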

Trade-Offs/Pitfalls

Governance that is too rigid slows delivery and pushes teams toward shadow AI and workarounds. Governance that is too light creates inconsistent controls and unmanaged risk, especially with agentic systems that can act and chain tools.

A common mistake is writing policies without embedding them into real delivery workflows, where approvals, assessments, and monitoring actually happen.
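Embedding a policy into a delivery workflow can be as simple as a release gate that refuses to pass until the required evidence exists. This is a minimal sketch, assuming a hypothetical list of evidence items and a hypothetical `release_gate` helper; the actual items would follow from your own approval gates and risk tiers.

```python
# Hypothetical evidence a use case must provide before release.
REQUIRED_EVIDENCE = ("impact_assessment", "owner_signoff", "monitoring_plan")


def release_gate(evidence: dict) -> list:
    """Return the names of missing evidence items.

    An empty list means the gate passes. Items that are absent or set to
    a falsy value (False, None, empty string) count as missing.
    """
    return [item for item in REQUIRED_EVIDENCE if not evidence.get(item)]


missing = release_gate({"impact_assessment": True, "owner_signoff": False})
if missing:
    print("Release blocked; missing:", missing)
```

Run as a step in the deployment pipeline, a check like this turns a written policy into something teams encounter at the moment of release rather than in a separate document.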

Decisions & Next Steps

With governance in place, the ecosystem map becomes an operating tool, not just a concept. Next, we tie the full framework together and show how leaders can sequence investments across infrastructure, data, models, and enterprise adoption while applying responsible AI overlays from day one.

Conclusion: How to Use This Map to Build Your AI Strategy

This AI ecosystem map is a decision tool, not a poster. Use it to locate where your AI program is strong, where it is fragile, and what must be built next to scale responsibly.

1) Start with the Strategic AI Stack and be honest about dependencies. If infrastructure is constrained, model ambition will stall. If data is unreliable, model outputs will be untrustworthy. If models are selected without an enterprise operating model, pilots will multiply without compounding value.

2) Apply the Paradigm Lens early in every use-case discussion. Decide whether the system is symbolic, predictive, generative, agentic, or physical before you choose vendors, controls, or success metrics. This prevents category errors, such as expecting deterministic behavior from generative systems or underestimating the risk of autonomy in agent workflows.

3) Treat the Responsible AI Overlays as enablement, not friction. Security and trust controls reduce operational surprises. Governance clarifies ownership, risk tiering, and autonomy boundaries, enabling teams to move faster with fewer reversals. If you wait to add overlays until later, you pay for it with rework.

4) Sequence investments like a portfolio, not a one-time transformation. Pick a small number of high-impact workflows, instrument them with value and adoption metrics, and ensure each one has the minimum viable ecosystem behind it. Then scale what works, standardize what repeats, and retire what does not.

AI advantage does not come from adopting the newest model first. It comes from building a coherent ecosystem in which capability, trust, and business outcomes reinforce one another over time.

Notes, Sources & Corrections

Editorial Notes

This guide is written for executive and operator audiences who need a shared, practical map of the AI ecosystem. Concepts are explained in plain language and organized to support decision-making across strategy, technology, security, and governance. Where implementation topics are mentioned, they are intentionally scoped at a high level to preserve space for future deep-dive articles.

We aim to maintain consistent terminology across the NTechAI ecosystem model, including the Strategic AI Stack, Responsible AI Overlays, and the Paradigm Lens. If a term is evolving in the industry, we use the most widely recognized wording and note it where needed.

AI & Editorial Disclosure

This article was produced using AI-assisted drafting and research synthesis, followed by rigorous human review and editing for clarity, consistency, and reasonable accuracy.

This guide is not legal, regulatory, or security advice, and decision-critical requirements should be confirmed against official primary sources.

We do not present speculative claims as facts. When we reference frameworks or standards, we link to authoritative sources so readers can verify their scope and applicability.

Sources

- European Commission. (n.d.). EU Artificial Intelligence Act (EU AI Act). European Commission. https://digital-strategy.ec.europa.eu/en/policies/european-approach-artificial-intelligence

- National Institute of Standards and Technology. (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0). NIST. https://www.nist.gov/itl/ai-risk-management-framework

- Organisation for Economic Co-operation and Development. (2019). OECD principles on artificial intelligence. OECD. https://oecd.ai/en/ai-principles

- Stanford Center for Research on Foundation Models. (2021). On the opportunities and risks of foundation models. Stanford University. https://crfm.stanford.edu/report.html

- OWASP Foundation. (2023). OWASP Top 10 for Large Language Model Applications. OWASP. https://owasp.org/www-project-top-10-for-large-language-model-applications/

Corrections and Accountability

If you spot an error, outdated reference, or unclear explanation, please contact us and include the section title plus the sentence or paragraph in question. Substantive corrections will be reviewed promptly. If a correction is confirmed, we will update the article and note the change transparently in the “Last updated” line and in this section, as appropriate.

Rights and Attribution

© NTechAI. Unless otherwise stated, the structure of the NTechAI ecosystem model and the accompanying explanations are original NTechAI editorial work. You may quote short excerpts with proper attribution and a link to the original article. Republishing full sections, re-hosting the article, or presenting the model as your own is not permitted without written permission.